The Google I/O 2024 keynote was a jam-packed Gemini fest, and CEO Sundar Pichai was right to describe it as its version of The Eras Tour – specifically, the “Gemini Era” – at the very top.

The entire keynote was about Gemini and AI; in fact, Google mentioned the latter 121 times. From unveiling a futuristic AI assistant called “Project Astra” that can run on a phone – and perhaps glasses, someday – to Gemini being infused into nearly every service or product the company offers, AI was definitely the big theme.

It was all enough to melt the mind of all but the most ardent LLM enthusiast, so we've broken down the 7 most important things that Google unveiled and discussed during its main I/O 2024 keynote.

1. Google dropped Project Astra – an “AI agent” for everyday life

So it turns out that Google does have an answer to OpenAI’s GPT-4o and Microsoft’s Copilot. Project Astra, dubbed an “AI agent” for everyday life, is essentially Google Lens on steroids and looks seriously impressive, able to understand, reason, and respond to live video and audio.

In a recorded demo on a Pixel phone, the user was seen walking around an office, providing a live feed from the rear camera and asking Astra questions off the cuff. Gemini was viewing and understanding the visuals while also tackling the questions.

It speaks to the multimodal and long-context capabilities in the backend of Gemini, which works in a jiffy to identify what it sees and deliver a response. In the demonstration, it knew what a specific part of a speaker was and could even identify a neighborhood in London. It’s also generative, as it quickly created a band name for a cute pup next to a stuffed animal (see the video above).

It won’t be rolling out immediately, but developers and press like us at TechRadar will get to try it out at I/O 2024. And while Google didn’t clarify, there was a teaser of glasses for Astra, which could mean Google Glass might make a comeback.

Still, even as a demo during Google I/O, it's seriously impressive and potentially very compelling. It could supercharge smartphones and the current assistants we have from Google and even Apple. Furthermore, it also shows off Google’s true AI ambitions: a tool that can be immensely helpful and no chore at all to use.

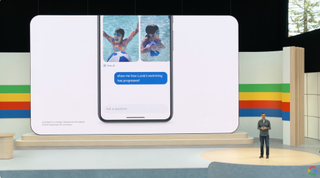

2. Google Photos got a helpful AI boost from Gemini

Ever wanted to quickly find a specific photo you captured at some point in the distant past? Maybe it's a note from a loved one, an early photo of a dog as a puppy, or even your license plate. Well, Google is making that wish a reality with a major update to Google Photos that fuses it with Gemini. This gives it access to your library, lets it search it, and easily delivers the result you’re looking for.

In a demo on stage, Sundar Pichai revealed that you can ask it for your license plate, and Photos will deliver an image showing it and the digits/characters that make up your plate. Similarly, you can ask for photos of when your child learned to swim along with any further specifics. It should make even the most disorganized photo libraries a bit easier to search.

Google has dubbed this feature “Ask Photos,” and will roll it out to all users in the “coming weeks”. It will almost certainly come in handy, and make folks who don't use Google Photos a bit jealous.

3. Your kid’s homework just got a lot easier thanks to NotebookLM

All parents will know the horror of trying to help kids with homework; if you ever knew about this stuff in the past, there’s no way the knowledge still lurks inside your brain 20 years later. But Google may have just made the task a lot easier, thanks to an upgrade to its NotebookLM note-taking app.

NotebookLM now has access to Gemini 1.5 Pro, and based on the demo given at I/O 2024, it’ll now be a better teacher than you’ve ever been. The demo showed Google’s Josh Woodward loading up a notebook filled with notes about a learning topic – in this case, science. With a single button press, he was able to create a detailed learning guide, with further outputs including quizzes and FAQs, all pulled from the source material.

Impressive – but it was about to get a lot better. A new feature – still a prototype for now – was able to output all of the content as audio, essentially creating a podcast-style discussion. What’s more, the audio featured more than one speaker, chatting about the topic naturally in a way that would definitely be more helpful than a frustrated parent attempting to play the role of teacher.

Woodward was even able to interrupt and ask a question, in this case “give us a basketball example” – at which point the AI switched tack and came up with clever metaphors for the topic, but in an accessible context. The parents on the TechRadar team are itching to try this one out.

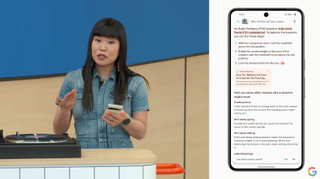

4. You’ll soon be able to search Google with a video

In a strange on-stage demo with a record player, Google showed off a very impressive new search trick. You can now record a video and search with it to get results, and hopefully an answer.

In this case, it was a Googler who was wondering how to use a record player; she hit record to film the unit in question while asking something and then sent it off. Google worked its search magic and provided an answer in text, which could be read aloud. It’s an entirely new way to search, like Google Lens for video, and also distinctly different from the forthcoming Project Astra everyday AI, as this needs to be recorded and then searched versus working in real time.

Still, it’s part of a Gemini and generative AI infusion with Google Search, aiming to keep you on that page and make it easier to get answers. Before this demo of searching with video, Google showed off a new generative experience for recipes and dining. This allows you to search for something in natural language and get recipes or even eatery recommendations on the results page.

Simply put, Google’s going full throttle with generative AI in search, both for results and for the various ways to get those results.

5. Google is joining the generative video party with Veo

We’ve been marveling at the creations of OpenAI’s text-to-video tool Sora for the past few months, and now Google is joining the generative video party with its new tool called Veo. Like Sora, Veo can generate minute-long videos in 1080p quality, all from a simple prompt.

That prompt can include cinematic effects, like a request for a time-lapse or aerial shot, and the early samples look impressive. You don’t have to start from scratch either – upload an input video with a command, and Veo can edit the clip to match your request. There’s also the option to add masks and tweak specific parts of a video.

The bad news? Like Sora, Veo isn’t widely available yet. Google says it’ll be available to select creators through VideoFX, one of its experimental Labs features, “over the coming weeks.” It could be a while until we see a wide rollout, but Google has promised to bring the feature to YouTube Shorts and other apps. And that’ll have Adobe shifting uneasily in its AI-generated chair.

6. Android got a big Gemini infusion

Much like Google’s “Circle to Search” feature sits on top of an application, Gemini is now being built into the core of Android to integrate with your flow. As demonstrated, Gemini can now view, read, and understand what’s on your phone’s screen, letting it anticipate questions about whatever you're viewing.

So it can get the context of a video you're watching, anticipate a summarization request when viewing a lengthy PDF, or be ready for myriad questions about an app you're in. Having a content-aware AI baked into a phone’s OS isn't a bad thing by any stretch and could prove super useful.

Alongside Gemini being integrated at the system level, Gemini Nano with Multimodality will launch later this year on Pixel devices. What will it enable? Well, it should speed things up, but the landmark feature, for now, is Gemini listening to calls and being able to alert you in real time if it's spam. That’s pretty cool and builds upon call screening, a long-standing feature of Pixel phones. It’s poised to be faster and process more on-device rather than sending it off to the cloud.

7. Google Workspace will get a lot smarter

Workspace users are getting a treasure trove of Gemini integrations and useful features that could make a big impact day to day. Within Mail, thanks to a new side panel on the left, you can ask Gemini to summarize all the recent conversations with a colleague. The result is then summarized with bullet points highlighting the most important aspects.

Gemini in Google Meet can give you the highlights of a meeting or what other folks on the call might be asking. You would no longer need to take notes during that call, which could prove helpful if it’s lengthy. Within Google Sheets, Gemini can help make sense of data and process requests like pulling a specific sum or data set.

The virtual teammate “Chip” may be the most futuristic example. It can live in a G-chat and be called up for various tasks or queries. While these tools will make their way into Workspace, likely through Labs first, the remaining question is when they will arrive for regular Gmail and Drive customers. Considering Google’s approach of AI for all and pushing it so hard with search, it’s likely a matter of time.